Self.endheaders(body, encode_chunked=encode_chunked)įile "/usr/local/lib/python3.6/http/client.py", line 1286, in endheaders Self._send_request(method, url, body, headers, encode_chunked)įile "/usr/local/lib/python3.6/http/client.py", line 1337, in _send_request

The problem is that group 0 is the root group and most likely I'll not get permission to add the user to it) ERROR - Task failed with exceptionįile "/home/airflow/.local/lib/python3.6/site-packages/urllib3/connectionpool.py", line 706, in urlopenįile "/home/airflow/.local/lib/python3.6/site-packages/urllib3/connectionpool.py", line 394, in _make_requestĬonn.request(method, url, **httplib_request_kw)įile "/usr/local/lib/python3.6/http/client.py", line 1291, in request (I still need to check if adding the user to group 0 at the host would solve the issue as pointed out by in the his comment. Task run fails, which is related to the fact that the default user doesn't have the needed permissions to /var/run/docker.sock. Using the above-mentioned setup with the official airflow image 2.1.4 and DockerOperator.

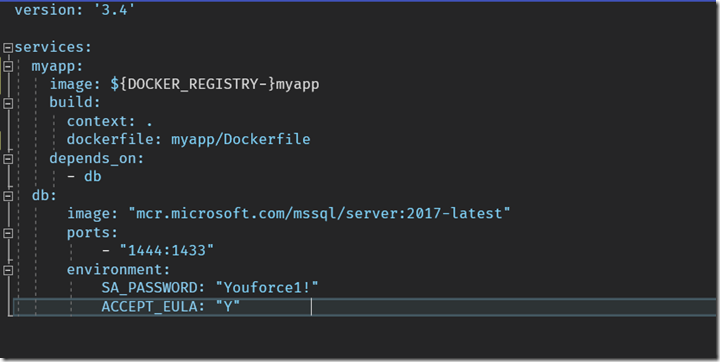

The /var/run/docker.sock is mounted into the containers. At the host, my UID belongs to docker group, but it doesn't belong to group 0. I'm launching the whole setup from a docker-compose.yml where I set AIRFLOW_UID to match my UID at the host machine and AIRFLOW_GID to 0 as suggested in the airflow documentation. At the moment, I'm testing the setup at one machine, but in the end I'll run the celery worker containers at separate machines operating in the same network sharing some of the airflow specific mount points(dags,logs,plugins) and user ids etc. The main goal is to run container based processing(using the DockerOperator) when the airflow celery worker is also running inside a docker container. (THE ORIGINAL QUESTION WAS EDITED TO MAKE IT MORE CLEAR)
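One way to narrow down a permission problem like this is to compare the ownership of the mounted socket with the user and groups of the process that tries to open it. The following is a minimal diagnostic sketch, not part of the original setup: the helper name `describe_socket_access` is hypothetical, it uses only the Python standard library, and it assumes it is run inside the worker container as the same user that runs the task.

```python
import os
import stat


def describe_socket_access(path="/var/run/docker.sock"):
    """Report who owns `path` and whether the current process can use it.

    Hypothetical helper for illustration; it only reads file metadata.
    """
    try:
        info = os.stat(path)
    except FileNotFoundError:
        # The socket is not mounted (or is mounted at another path).
        return {"path": path, "exists": False}

    # Supplementary groups plus the effective group of this process.
    process_gids = set(os.getgroups()) | {os.getegid()}

    return {
        "path": path,
        "exists": True,
        "is_socket": stat.S_ISSOCK(info.st_mode),
        "owner_uid": info.st_uid,
        "group_gid": info.st_gid,
        "mode": stat.filemode(info.st_mode),
        "process_uid": os.geteuid(),
        "process_gids": sorted(process_gids),
        "in_socket_group": info.st_gid in process_gids,
        # os.access checks permissions using the real UID/GID of the process.
        "can_read_write": os.access(path, os.R_OK | os.W_OK),
    }


if __name__ == "__main__":
    for key, value in describe_socket_access().items():
        print(f"{key}: {value}")
```

If `in_socket_group` and `can_read_write` both come back `False`, that would be consistent with the explanation above: the container user (AIRFLOW_UID with GID 0) is not a member of the group that owns the socket on the host.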
